When interpreting p-values, remember that they give the probability of getting results at least as extreme as yours, assuming there’s no real effect. A small p-value suggests the result would be unusual under the null hypothesis, but it doesn’t tell you how important or meaningful the effect is. Don’t confuse statistical significance with practical significance. To fully understand your findings, examine the effect size and consider complementary methods such as Bayesian analysis.

Key Takeaways

- A small p-value indicates data are unlikely under the null hypothesis but doesn’t confirm practical importance.

- P-values do not measure effect size or real-world significance.

- Combining p-values with effect size provides a clearer understanding of the findings’ relevance.

- Bayesian methods offer a probabilistic view of hypotheses, complementing traditional p-value interpretation.

- Sample size affects the p-value and the precision of the effect size estimate; consider both for accurate data interpretation and decision-making.

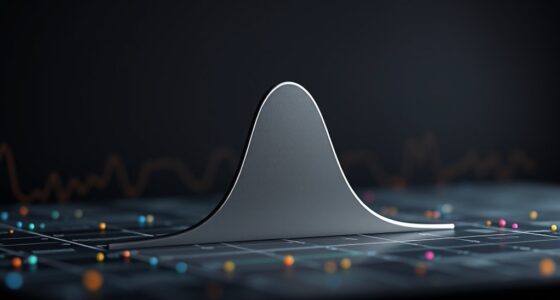

Have you ever wondered how scientists determine whether their findings are truly significant? It’s a question that gets to the heart of scientific research and how we interpret data. Traditionally, p-values have been the primary tool for assessing whether results are statistically significant. However, understanding what a p-value really tells you can be tricky. A p-value is the probability of obtaining results as extreme as, or more extreme than, what you observed, assuming the null hypothesis is true. It doesn’t tell you whether the effect is meaningful or large, only how surprising your data would be if chance alone were at work. That’s where effect size interpretation comes into play, giving you a clearer picture of the practical importance of your findings.
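To make the definition concrete, here is a minimal sketch that estimates a p-value by permutation and reports an effect size (Cohen's d) next to it. The two groups of measurements are invented for illustration, and the function names `permutation_p_value` and `cohens_d` are our own, not taken from any particular library.

```python
import random
import statistics

# Hypothetical measurements, invented purely for illustration.
treatment = [5.1, 4.9, 5.6, 5.8, 6.0, 5.4, 5.7, 5.2]
control = [4.8, 4.6, 5.0, 5.1, 4.7, 5.3, 4.9, 4.5]

def mean_diff(a, b):
    """Difference between the two group means."""
    return sum(a) / len(a) - sum(b) / len(b)

def permutation_p_value(a, b, n_shuffles=5000, seed=0):
    """Two-sided p-value: the share of random relabelings whose absolute
    mean difference is at least as large as the observed one."""
    rng = random.Random(seed)
    observed = abs(mean_diff(a, b))
    pooled = list(a) + list(b)
    hits = 0
    for _ in range(n_shuffles):
        rng.shuffle(pooled)  # relabel under the null: group labels do not matter
        shuffled = abs(mean_diff(pooled[:len(a)], pooled[len(a):]))
        if shuffled >= observed - 1e-12:  # small tolerance for float rounding
            hits += 1
    # Add-one correction so the estimate is never exactly zero.
    return (hits + 1) / (n_shuffles + 1)

def cohens_d(a, b):
    """Standardized mean difference using the pooled standard deviation."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * statistics.variance(a)
                  + (nb - 1) * statistics.variance(b)) / (na + nb - 2)
    return mean_diff(a, b) / pooled_var ** 0.5

p_value = permutation_p_value(treatment, control)
effect_size = cohens_d(treatment, control)
print(f"p-value ~ {p_value:.4f}, Cohen's d ~ {effect_size:.2f}")
```

The p-value answers "how surprising is this difference if the labels were arbitrary?", while Cohen's d answers "how big is the difference in standard deviation units?". You need both numbers to judge a result.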

In recent years, Bayesian methods have gained popularity as an alternative or complement to traditional p-value analysis. Unlike p-values, Bayesian approaches incorporate prior knowledge or beliefs, updating these with new data to produce posterior probabilities. This provides a more nuanced view of how likely a hypothesis is, given the evidence. Bayesian methods can help you move beyond the binary “significant or not” mindset, offering probability statements about the effect or hypothesis directly. When combined with effect size interpretation, Bayesian methods can give you a richer understanding of your data. For example, instead of just noting that a p-value is below 0.05, you can assess the probability that the effect size exceeds a practically meaningful threshold, considering your prior information. This approach aligns more closely with how you might naturally interpret evidence in real-world decision-making, where effect size and probability matter.
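As a rough sketch of that idea, the snippet below uses the simplest Bayesian setup: a normal prior on the effect combined with a normally distributed estimate (a conjugate normal-normal model). The prior, the estimate, its standard error, and the "practically meaningful" threshold are all hypothetical numbers chosen for illustration, and `posterior_for_effect` is our own helper name.

```python
from statistics import NormalDist

def posterior_for_effect(prior_mean, prior_sd, estimate, std_error):
    """Normal-normal conjugate update: combine a normal prior on the effect
    with a normally distributed estimate, weighting each by its precision."""
    prior_precision = 1 / prior_sd ** 2
    data_precision = 1 / std_error ** 2
    post_var = 1 / (prior_precision + data_precision)
    post_mean = post_var * (prior_precision * prior_mean
                            + data_precision * estimate)
    return NormalDist(post_mean, post_var ** 0.5)

# Hypothetical inputs: a skeptical prior centered on no effect,
# and an observed effect of 0.6 with standard error 0.2.
posterior = posterior_for_effect(prior_mean=0.0, prior_sd=1.0,
                                 estimate=0.6, std_error=0.2)

threshold = 0.3  # assumed smallest effect that matters in practice
prob_meaningful = 1 - posterior.cdf(threshold)

print(f"Posterior mean: {posterior.mean:.3f}")
print(f"P(effect > {threshold}) = {prob_meaningful:.3f}")
```

The output is a direct probability statement about the effect, conditional on the prior you chose. A different prior gives a different answer, so it is worth reporting how sensitive the result is to that choice.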

Understanding p-values in the context of effect size interpretation means recognizing that a small p-value doesn’t automatically imply a large or important effect. You might find a statistically significant result with a trivial effect size: the data would be surprising if there were no effect at all, yet the effect is too small to have meaningful impact. Conversely, a non-significant p-value could hide a substantial effect if the sample size is too small to detect it reliably. Bayesian methods can help clarify these situations by providing probability distributions for effect sizes, allowing you to see not just whether an effect exists, but how large it likely is and whether it’s practically relevant. Sample size matters here too, because it determines the statistical power to detect an effect and the precision of the effect size estimate.
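To see how a small study can miss a substantial effect, here is a hedged sketch of a power calculation. It assumes a two-sided, two-sample z-test with equal group sizes and a known standard deviation, which is a simplification (a t-test is more usual for small samples). The effect size of 0.5 and the group sizes are illustrative choices, and `z_test_power` is our own helper name.

```python
from statistics import NormalDist

def z_test_power(effect_size_d, n_per_group, alpha=0.05):
    """Approximate power of a two-sided, two-sample z-test for a
    standardized effect (Cohen's d) with equal group sizes."""
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    # Expected value of the z statistic when the true effect is d.
    shift = effect_size_d * (n_per_group / 2) ** 0.5
    # Probability that the statistic lands in either rejection region.
    return (1 - std_normal.cdf(z_crit - shift)) + std_normal.cdf(-z_crit - shift)

power_small_study = z_test_power(effect_size_d=0.5, n_per_group=10)
power_large_study = z_test_power(effect_size_d=0.5, n_per_group=100)

print(f"Power with 10 per group:  {power_small_study:.2f}")
print(f"Power with 100 per group: {power_large_study:.2f}")
```

Under these assumptions, the small study detects a medium-sized effect only a minority of the time, so a non-significant result from it says little about whether the effect exists.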

Ultimately, interpreting statistical significance isn’t just about crossing an arbitrary threshold. It’s about understanding the magnitude of the effect, the certainty around it, and how the evidence fits within your existing knowledge. By integrating Bayesian methods and effect size interpretation into your analysis, you gain a more complete, accurate picture of what your data truly reveal. This approach empowers you to make better-informed decisions and avoid common pitfalls associated with relying solely on p-values.

Frequently Asked Questions

How Do P-Values Relate to Confidence Intervals?

You can think of p-values and confidence intervals as two sides of the same coin in hypothesis testing and statistical inference. For a two-sided test, a p-value below the significance level (say 0.05) means the matching confidence interval (here, 95%) won’t include the null value. Conversely, if that confidence interval includes the null value, the p-value is above 0.05, showing weaker evidence against the null hypothesis. The interval adds something the p-value lacks: a range of plausible effect sizes.
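The correspondence can be checked numerically. This sketch assumes a normally distributed estimate with a known standard error (a z-based test and interval), and the estimates fed into it are made-up values. `p_value_and_ci` is our own helper name.

```python
from statistics import NormalDist

def p_value_and_ci(estimate, std_error, null_value=0.0, alpha=0.05):
    """Two-sided z-test p-value and the matching (1 - alpha) confidence interval."""
    std_normal = NormalDist()
    z = (estimate - null_value) / std_error
    p_value = 2 * (1 - std_normal.cdf(abs(z)))
    margin = std_normal.inv_cdf(1 - alpha / 2) * std_error
    return p_value, (estimate - margin, estimate + margin)

# Hypothetical estimates, all with the same standard error of 0.2.
results = {}
for estimate in [0.1, 0.3, 0.6, -0.5]:
    p_value, (low, high) = p_value_and_ci(estimate, std_error=0.2)
    excludes_null = low > 0.0 or high < 0.0
    results[estimate] = (p_value, low, high, excludes_null)
    print(f"estimate={estimate:+.1f}  p={p_value:.4f}  "
          f"95% CI=({low:+.3f}, {high:+.3f})  excludes 0: {excludes_null}")
```

In every row, the p-value is below 0.05 exactly when the 95% interval excludes zero, because both are built from the same estimate and standard error.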

Can P-Values Determine Practical Significance?

P-values alone can’t determine practical significance because they measure statistical significance, not real-world impact. To judge practical relevance, you need to consider the effect size and the context in which the result will be used. Just because a p-value indicates a statistically significant result doesn’t mean it’s meaningful in practice. Always evaluate the effect’s magnitude before making decisions based on it.

What Are Common Misinterpretations of P-Values?

Misconceptions about significance are like mistaking a map’s detail for the territory itself. You might think a low p-value proves a real effect, or gives the probability that the null hypothesis is true, but it does neither. A p-value only describes how likely data at least as extreme as yours would be if there were no effect, and overlooking this leads to overconfidence in results. Remember, a p-value isn’t the ultimate truth, just a piece of the puzzle.
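A quick simulation shows why a low p-value is not proof of a real effect. The sketch below runs many hypothetical experiments in which the null hypothesis is true by construction (both groups come from the same distribution) and counts how often p still falls below 0.05. It assumes a z-test with a known standard deviation of 1, and the function name and settings are our own choices.

```python
import math
import random

def simulate_false_positive_rate(n_experiments=2000, n_per_group=30,
                                 alpha=0.05, seed=1):
    """Share of experiments with p < alpha when there is truly no effect."""
    rng = random.Random(seed)
    std_error = math.sqrt(2 / n_per_group)  # known SD of 1 in both groups
    false_positives = 0
    for _ in range(n_experiments):
        # Both groups are drawn from the same distribution: the null is true.
        a = [rng.gauss(0, 1) for _ in range(n_per_group)]
        b = [rng.gauss(0, 1) for _ in range(n_per_group)]
        z = (sum(a) / n_per_group - sum(b) / n_per_group) / std_error
        p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal p-value
        if p_value < alpha:
            false_positives += 1
    return false_positives / n_experiments

rate = simulate_false_positive_rate()
print(f"Share of 'significant' results with no real effect: {rate:.3f}")
```

The share should land near the chosen threshold of 0.05. That is what the threshold means: even with no effect anywhere, roughly one test in twenty will look significant.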

How Should P-Values Influence Decision-Making?

You should use p-values as one of your decision criteria by comparing them to a significance threshold chosen in advance, often 0.05. If your p-value falls below this threshold, you can consider the results statistically significant and potentially act on them. However, don’t rely solely on p-values; consider the broader context, study design, and practical importance to make well-informed decisions.

Are P-Values Affected by Sample Size?

Did you know that larger sample sizes make it easier for even small effects to reach significance? A bigger sample shrinks the standard error of your estimate, so the same observed effect produces a smaller p-value. That means you might get a significant result with a large sample even if the actual effect is tiny. Always consider how your sample size influences your p-value before drawing conclusions about your data’s significance.
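Here is a small sketch of that relationship. It holds the observed standardized effect fixed at a tiny value (d = 0.05, an illustrative choice) and recomputes the two-sided p-value for growing group sizes, assuming a two-sample z-test with equal groups. `two_sided_p` is our own helper name.

```python
import math

def two_sided_p(effect_size_d, n_per_group):
    """Two-sided p-value of a two-sample z-test when the observed
    standardized difference is d and both groups have n observations."""
    z = effect_size_d * math.sqrt(n_per_group / 2)
    # erfc(|z| / sqrt(2)) equals 2 * (1 - Phi(|z|)) and stays accurate for large z.
    return math.erfc(abs(z) / math.sqrt(2))

sample_sizes = [100, 1_000, 10_000, 100_000]
p_values = {n: two_sided_p(0.05, n) for n in sample_sizes}

for n, p in p_values.items():
    print(f"n per group = {n:>7,}  p = {p:.3g}")
```

The effect never changes, yet the p-value moves from clearly non-significant to extremely small as the sample grows. This is why a p-value should always be read alongside the effect size and the sample size.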

Conclusion

Think of p-values as your statistical compass, guiding you through the fog of data. A small p-value flags results that would be surprising if there were no effect; a large one means the data are compatible with no effect, not that no effect exists. Remember, they’re just one part of the story, not the whole map. So, like a seasoned traveler, read p-values alongside effect sizes, sample size, and context to navigate the vast landscape of research with confidence and clarity.