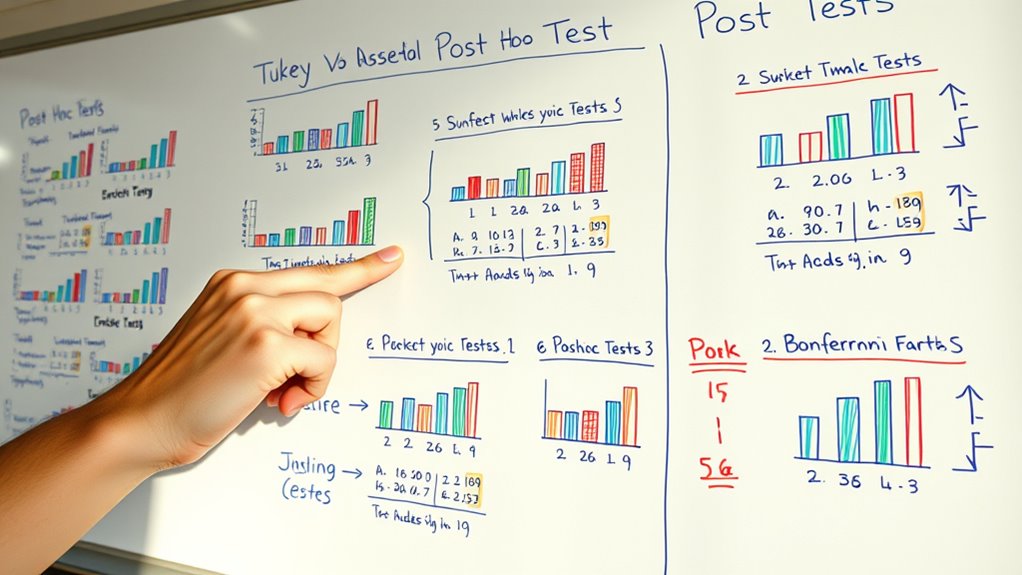

Post hoc tests help you identify exactly which groups differ after an ANOVA shows a significant overall effect. Tukey’s HSD compares all pairs of group means while controlling the familywise error rate, and Bonferroni divides the significance threshold by the number of comparisons to control false positives. These methods limit the risk of mistaking random variation for a real difference, though they cannot eliminate it. Understanding how each controls error rates and when to use them will give you clearer, more reliable results in your analysis.

Key Takeaways

- Post hoc tests identify specific group differences after a significant ANOVA result.

- Tukey’s HSD compares every pair of group means while holding the familywise error rate at the chosen alpha.

- Bonferroni correction divides alpha by the number of comparisons, reducing false positives at some cost in power.

- Multiple comparison methods help manage increased error risk from numerous tests.

- Choosing the appropriate post hoc test depends on study design and desired error control.

When you conduct an analysis of variance (ANOVA), you might find a significant difference among your groups, but it doesn’t tell you exactly where those differences lie. That’s where post hoc tests come into play. After establishing that at least one group differs from the others, you need a way to explore those differences further. This process involves pairwise comparisons, where you compare each group against every other group to pinpoint specific differences. However, performing multiple comparisons raises a critical concern: the risk of Type I errors, or false positives. To address this, post hoc tests incorporate error control methods, which adjust the criteria for significance to keep the overall error rate in check.
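To see how quickly the number of pairwise comparisons grows with the number of groups, here is a minimal Python sketch. The group labels are hypothetical placeholders chosen for illustration, not data from any real study.

```python
from itertools import combinations

# Hypothetical group labels, used only for illustration.
groups = ["control", "dose_low", "dose_mid", "dose_high"]

# Every unordered pair of groups is one pairwise comparison.
pairs = list(combinations(groups, 2))

# With k groups there are k * (k - 1) / 2 such pairs.
k = len(groups)
n_pairs = k * (k - 1) // 2

for first, second in pairs:
    print(f"{first} vs {second}")
print(f"{k} groups -> {n_pairs} pairwise comparisons")
```

Four groups already require six comparisons, which is why the error rate across the whole set of tests needs attention.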

Pairwise comparisons are essential because they break down the broader ANOVA result into detailed insights. Without them, you’d know only that differences exist somewhere among your groups but not which groups are responsible. These comparisons involve conducting multiple statistical tests, each examining a specific pair of groups. The challenge is that with many comparisons, the chance of falsely declaring a difference significant increases. For example, if you run 20 tests at a 5% significance level and no real differences exist, you should expect about one false positive just by chance. This is why error control becomes vital. The Bonferroni correction tightens the significance threshold for each test, while Tukey’s HSD uses a critical value from the studentized range distribution that accounts for all pairwise comparisons at once. Both reduce the likelihood of false positives and make your results more reliable.
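The arithmetic behind the 20-test example can be sketched in a few lines of Python. The function names are made up for this illustration, and the familywise calculation assumes that every null hypothesis is true and that the tests are independent. Pairwise comparisons from one ANOVA are not fully independent, so treat the second figure as an approximation.

```python
def expected_false_positives(n_tests: int, alpha: float) -> float:
    """Expected number of false positives when every null hypothesis is true."""
    return n_tests * alpha


def familywise_error_rate(n_tests: int, alpha: float) -> float:
    """Chance of at least one false positive, assuming independent tests."""
    return 1.0 - (1.0 - alpha) ** n_tests


alpha = 0.05
for n_tests in (1, 3, 6, 10, 20):
    expected = expected_false_positives(n_tests, alpha)
    fwer = familywise_error_rate(n_tests, alpha)
    print(f"{n_tests:2d} tests: expected false positives = {expected:.2f}, "
          f"chance of at least one = {fwer:.3f}")
```

Under these assumptions, 20 uncorrected tests carry roughly a 64% chance of at least one false positive.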

Error control strategies modify the p-value thresholds for each comparison to maintain the overall confidence level across all tests. The Bonferroni method, for instance, divides the desired alpha level (like 0.05) by the number of comparisons, making it more stringent to declare significance. While this reduces false positives, it also increases the chance of missing real differences (Type II errors). Alternatively, methods like Tukey’s HSD are tailored for pairwise comparisons in ANOVA, balancing error control with statistical power. These tests are especially popular because they are straightforward and designed specifically for comparing all possible pairs of group means, providing clear guidance on which differences are statistically significant.
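The Bonferroni adjustment described above is simple enough to sketch directly. The p-values below are invented for illustration, and `bonferroni_threshold` is a hypothetical helper name, not a library function.

```python
def bonferroni_threshold(alpha: float, n_comparisons: int) -> float:
    """Per-comparison significance threshold under the Bonferroni correction."""
    if n_comparisons < 1:
        raise ValueError("n_comparisons must be at least 1")
    return alpha / n_comparisons


# Hypothetical raw p-values for three pairwise comparisons (illustration only).
raw_p_values = {"A vs B": 0.030, "A vs C": 0.004, "B vs C": 0.200}

alpha = 0.05
threshold = bonferroni_threshold(alpha, len(raw_p_values))

# A comparison is declared significant only if its raw p-value
# falls below the adjusted threshold.
decisions = {pair: p < threshold for pair, p in raw_p_values.items()}

print(f"Adjusted threshold: {threshold:.4f}")
for pair, significant in decisions.items():
    print(f"{pair}: p = {raw_p_values[pair]:.3f}, significant = {significant}")
```

In this made-up example, "A vs B" would pass an uncorrected 0.05 threshold but fails the adjusted one, which illustrates the loss of power that comes with the stricter criterion.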

Frequently Asked Questions

How Do I Choose the Right Post Hoc Test for My Study?

Choose a post hoc test based on which comparisons you need and which assumptions your data meet. If you want all pairwise comparisons and group variances are roughly equal, Tukey’s test works well, and its Tukey-Kramer form handles unequal sample sizes. For a small set of planned comparisons, or when you want a simple and conservative adjustment, Bonferroni is suitable. If variances are unequal, consider Games-Howell. Think about your data’s characteristics and the level of control you need over Type I errors to pick the best test for your study.
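For the Tukey case, a minimal sketch using SciPy might look like the following. It assumes SciPy 1.8 or later, where `scipy.stats.tukey_hsd` is available, and the three groups are invented numbers for illustration only.

```python
from scipy.stats import f_oneway, tukey_hsd

# Hypothetical measurements for three groups (illustration only).
group_a = [10.0, 11.0, 12.0, 10.0, 11.0]
group_b = [10.5, 11.5, 11.0, 10.0, 12.0]
group_c = [20.0, 21.0, 19.0, 20.0, 22.0]

# Step 1: the overall ANOVA asks whether any group mean differs.
anova = f_oneway(group_a, group_b, group_c)
print(f"ANOVA: F = {anova.statistic:.2f}, p = {anova.pvalue:.4g}")

# Step 2: Tukey's HSD compares every pair of group means.
result = tukey_hsd(group_a, group_b, group_c)

labels = ["A", "B", "C"]
for i in range(len(labels)):
    for j in range(i + 1, len(labels)):
        # statistic[i, j] is the difference in means (group i minus group j).
        diff = result.statistic[i, j]
        p = result.pvalue[i, j]
        print(f"{labels[i]} vs {labels[j]}: mean diff = {diff:.2f}, p = {p:.4f}")
```

With these made-up numbers, groups A and B are close together while C sits far from both, so the pairwise p-values should separate C from the other two groups.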

Are Post Hoc Tests Appropriate for All Types of Data?

Post hoc tests aren’t suitable for all types of data because standard versions such as Tukey’s HSD rely on specific assumptions, like approximately normal residuals and homogeneity of variances. If your data violate these assumptions, the results may be misleading. Always check whether your data meet them before applying post hoc tests. If they don’t, consider alternatives such as Games-Howell or non-parametric tests to reach more reliable conclusions.
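One possible way to run these checks is with SciPy’s `levene` (equal variances) and `shapiro` (normality) functions, as in the sketch below. The data are invented for illustration. With groups this small, both tests have little power, so a non-significant result is weak evidence that the assumptions hold.

```python
from scipy.stats import levene, shapiro

# Hypothetical measurements for three groups (illustration only).
samples = {
    "A": [10.0, 11.0, 12.0, 10.0, 11.0],
    "B": [10.5, 11.5, 11.0, 10.0, 12.0],
    "C": [20.0, 21.0, 19.0, 20.0, 22.0],
}

# Levene's test: a small p-value suggests the group variances differ.
levene_stat, levene_p = levene(*samples.values())
print(f"Levene: W = {levene_stat:.3f}, p = {levene_p:.3f}")

# Shapiro-Wilk test per group: a small p-value suggests non-normality.
shapiro_p = {}
for name, values in samples.items():
    stat, p = shapiro(values)
    shapiro_p[name] = p
    print(f"Shapiro-Wilk, group {name}: W = {stat:.3f}, p = {p:.3f}")
```

Plots of the residuals are a useful complement to these tests, especially when samples are small.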

Can Post Hoc Tests Be Used With Small Sample Sizes?

Think of small samples as tiny boats navigating stormy seas: you can run post hoc tests, but the results are easily tossed around by noise. With small data sets, the tests have low power, so real differences are often missed (Type II errors), and assumptions such as normality are harder to verify. You can still run post hoc tests, but interpret them cautiously and consider limiting yourself to a few planned comparisons.

What Are the Limitations of Tukey and Bonferroni Tests?

Both tests trade statistical power for error control. Bonferroni becomes increasingly conservative as the number of comparisons grows, which reduces your chances of detecting true differences, especially with small sample sizes. Tukey’s HSD is less conservative when you compare all pairs, but it assumes roughly equal variances and is designed for pairwise comparisons of means only. So use these tests carefully, balancing error control against the sensitivity your analysis needs.

How Do Post Hoc Tests Affect the Overall Statistical Significance?

Post hoc tests don’t change the overall ANOVA result. They control the risk of false positives across the follow-up comparisons by adjusting p-values or significance thresholds, so you are less likely to declare differences when none exist. These corrections make your results more trustworthy, but they also make true effects harder to detect, and a significant ANOVA can occasionally be followed by no significant pairwise difference. Overall, post hoc tests refine your conclusions, balancing the risk of errors with the clarity of your findings.

Conclusion

Understanding post hoc tests like Tukey and Bonferroni helps you make sense of complex data. For instance, with 10 independent comparisons at the 0.05 level and no real differences, the chance of at least one false positive is about 40%, while a Bonferroni correction keeps it at or below 5%. This highlights how choosing the right test safeguards your results’ accuracy. Next time you analyze data, remember that these tests aren’t just technical tools. They’re your allies in making confident, reliable conclusions.
