To master random forests for statistics like a pro, focus on understanding how an ensemble of decision trees improves prediction accuracy and reduces overfitting. Use feature importance metrics to identify the variables that most influence your outcomes, and use visualization tools like partial dependence plots to interpret their effects. This approach makes your models more transparent and supports better decision-making. By exploring these techniques further, you’ll unlock the full potential of random forests for clear, actionable insights.

Key Takeaways

- Utilize feature importance metrics to identify key variables influencing model predictions and enhance understanding.

- Employ visualization tools like partial dependence plots to interpret individual feature effects clearly.

- Leverage ensemble decision trees to improve model accuracy and reduce overfitting in complex datasets.

- Use random forests to troubleshoot model issues by flagging problematic features or data inconsistencies.

- Apply model insights to support transparent, data-driven decision-making and professional reporting.

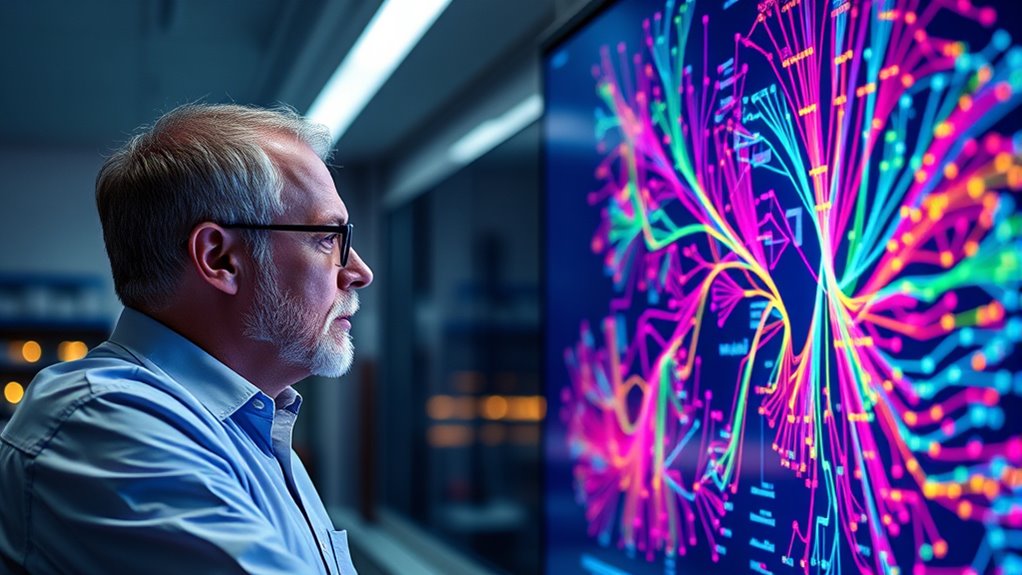

Random forests have become a powerful tool in statistical analysis, offering robust and flexible solutions for both classification and regression tasks. When you use a random forest, you’re leveraging an ensemble of decision trees that work together to improve prediction accuracy and reduce overfitting. One of the key advantages of this method is its ability to assess feature importance, helping you identify which variables most influence your model’s predictions. This insight is essential because it allows you to understand what drives your data, making your models more transparent and grounded in domain knowledge.
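To see the ensemble effect in practice, here is a minimal sketch that fits a single decision tree and a random forest on the same data and prints training and test accuracy for each. It assumes scikit-learn is installed, and the synthetic dataset from `make_classification` is only a stand-in for your own data.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

# Synthetic data stands in for a real dataset (an assumption for illustration).
X, y = make_classification(
    n_samples=1000, n_features=20, n_informative=5, random_state=0
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=0
)

# One fully grown tree versus an ensemble of 200 trees.
tree = DecisionTreeClassifier(random_state=0).fit(X_train, y_train)
forest = RandomForestClassifier(n_estimators=200, random_state=0).fit(X_train, y_train)

# Compare how well each model generalizes from training data to held-out data.
for name, model in [("single tree", tree), ("random forest", forest)]:
    train_acc = model.score(X_train, y_train)
    test_acc = model.score(X_test, y_test)
    print(f"{name}: train={train_acc:.3f}, test={test_acc:.3f}")
```

The number to watch is the gap between training and test accuracy. A smaller gap for the forest is the usual sign that averaging many trees has reduced overfitting, though the exact figures depend on the data.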

Feature importance measures, such as mean decrease in impurity or permutation importance, give you a clear ranking of your variables. This helps you focus on the most relevant features, streamline your models, and potentially improve their performance by removing noise variables. As you analyze your data, this insight into feature importance can guide feature selection, making your models leaner and more efficient. It also enhances model interpretability, which is fundamental when explaining your results to stakeholders or making data-driven decisions. Unlike some black-box models, random forests can offer tangible insights into how different features contribute to the outcome.
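As a rough illustration of the two measures named above, the sketch below computes mean decrease in impurity and permutation importance for the same fitted forest. It assumes scikit-learn; the synthetic data and the feature names `x0` to `x7` are hypothetical placeholders.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

# Synthetic regression data: 3 of the 8 features actually drive the target.
X, y = make_regression(
    n_samples=500, n_features=8, n_informative=3, noise=10.0, random_state=0
)
feature_names = [f"x{i}" for i in range(X.shape[1])]  # hypothetical names
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

model = RandomForestRegressor(n_estimators=200, random_state=0).fit(X_train, y_train)

# Mean decrease in impurity: computed from the training data during fitting.
mdi = model.feature_importances_

# Permutation importance: drop in score when one feature is shuffled,
# measured here on held-out data.
perm = permutation_importance(model, X_test, y_test, n_repeats=10, random_state=0)

# Rank features by permutation importance, highest first.
ranking = sorted(
    zip(feature_names, mdi, perm.importances_mean),
    key=lambda row: row[2],
    reverse=True,
)
for name, mdi_score, perm_score in ranking:
    print(f"{name}: MDI={mdi_score:.3f}, permutation={perm_score:.3f}")
```

If the two rankings disagree sharply on your own data, that is worth investigating before you drop any variables.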

Feature importance helps identify key variables, streamline models, and improve interpretability for better insights and decision-making.

Model interpretability is often a concern with complex algorithms, but random forests strike a balance between performance and transparency. While they’re more complex than a simple decision tree, tools like feature importance rankings and partial dependence plots help demystify how the model makes predictions. You can visualize the effect of individual variables on the predicted outcome, making it easier to interpret the model’s behavior. This interpretability is especially valuable in fields like healthcare, finance, or any domain where understanding the why behind a prediction is just as important as the prediction itself.
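The idea behind a partial dependence plot can be sketched in a few lines: fix one feature at a value for every row, average the model’s predictions, and repeat across a grid of values. The helper `partial_dependence_1d` below is a hypothetical hand-rolled function written for illustration, and it sweeps from the feature’s minimum to its maximum for simplicity. scikit-learn ships its own version in `sklearn.inspection` (for example `PartialDependenceDisplay.from_estimator`), which you would normally use for plotting.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor

# Synthetic data stands in for a real dataset (an assumption for illustration).
X, y = make_regression(
    n_samples=400, n_features=5, n_informative=3, noise=5.0, random_state=0
)
model = RandomForestRegressor(n_estimators=100, random_state=0).fit(X, y)


def partial_dependence_1d(model, X, feature, grid_size=20):
    """Average prediction as one feature is swept over a grid of values."""
    grid = np.linspace(X[:, feature].min(), X[:, feature].max(), grid_size)
    averages = []
    for value in grid:
        X_mod = X.copy()
        X_mod[:, feature] = value  # force every row to the same feature value
        averages.append(model.predict(X_mod).mean())
    return grid, np.array(averages)


grid, pd_curve = partial_dependence_1d(model, X, feature=0)

# Print every fifth grid point; plotting grid against pd_curve gives the PDP.
for g, p in zip(grid[::5], pd_curve[::5]):
    print(f"x0={g:.2f} -> average prediction {p:.2f}")
```

A flat curve suggests the feature has little average effect on the prediction, while a steep or curved one shows where the model’s output changes most.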

Moreover, the ability to interpret your model enhances trust and facilitates troubleshooting. If your random forest model performs poorly or produces unexpected results, understanding feature importance can point you toward problematic variables or data issues. It also allows you to communicate your findings more effectively, translating complex model behavior into insights that non-technical stakeholders can grasp. Ultimately, mastering feature importance and model interpretability in random forests empowers you to build models that are not only accurate but also understandable and actionable, elevating your statistical analysis to a professional level.

Frequently Asked Questions

How Do Random Forests Handle Missing Data?

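Whether a random forest can handle missing values depends on the implementation. Some implementations deal with them internally, for example by deciding at each split which branch observations with missing values should follow, while others require complete data. Where native support is unavailable, or when you want explicit control, apply imputation beforehand and fit it on the training data only so that no information leaks from the test set. Either way, consider why the values are missing: if missingness is related to the outcome, neither approach removes the resulting bias automatically. This flexibility lets you work with incomplete datasets while keeping your model robust when data isn’t perfect.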
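One common way to impute beforehand is to put the imputer and the forest in a single pipeline, so the imputation is refit on each training fold. The sketch below assumes scikit-learn and uses median imputation purely as an example. The missing values are knocked out at random for illustration, which real missingness rarely resembles.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.impute import SimpleImputer
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline

X, y = make_classification(n_samples=500, n_features=10, random_state=0)

# Knock out roughly 10% of entries at random to simulate missing data.
rng = np.random.default_rng(0)
X_missing = X.copy()
X_missing[rng.random(X.shape) < 0.10] = np.nan

# The imputer is fit inside each cross-validation fold, on training rows only.
pipeline = make_pipeline(
    SimpleImputer(strategy="median"),
    RandomForestClassifier(n_estimators=200, random_state=0),
)
scores = cross_val_score(pipeline, X_missing, y, cv=5)
print(f"mean CV accuracy: {scores.mean():.3f}")
```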

Can Random Forests Be Used for Time Series Forecasting?

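Imagine you’re trying to predict the future with a crystal ball, but the scene shifts with each passing moment. Random forests can be used for time series forecasting, but they don’t naturally account for temporal dependencies, and because each tree predicts an average of training targets, they cannot extrapolate beyond the range of values seen during training. You’ll need to create lag features so the model can see recent history, and remove trends (for example by differencing) so the target stays within a stable range. You should also split your data chronologically rather than at random when evaluating. Without these steps, the model might miss patterns, making your forecasts less reliable, like trying to read a moving shadow.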
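Here is a minimal sketch of the lag-feature approach on a synthetic seasonal series with no trend. The helper `make_lag_matrix` is a hypothetical function written for this example, and the choices of 12 lags and a 240-row training window are arbitrary assumptions.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor


def make_lag_matrix(series, n_lags):
    """Build rows of the n_lags previous values; the target is the next value."""
    X = np.column_stack(
        [series[i : len(series) - n_lags + i] for i in range(n_lags)]
    )
    y = series[n_lags:]
    return X, y


# Synthetic seasonal series (period 12) plus noise, with no trend.
rng = np.random.default_rng(0)
t = np.arange(300)
series = np.sin(2 * np.pi * t / 12) + 0.1 * rng.normal(size=t.size)

X, y = make_lag_matrix(series, n_lags=12)

# Chronological split: train on the past, test on the most recent observations.
split = 240
X_train, X_test = X[:split], X[split:]
y_train, y_test = y[:split], y[split:]

model = RandomForestRegressor(n_estimators=200, random_state=0).fit(X_train, y_train)
predictions = model.predict(X_test)
mae = np.mean(np.abs(predictions - y_test))
print(f"one-step-ahead MAE on the last {len(y_test)} points: {mae:.3f}")
```

For a series with a trend, one option is to apply `np.diff` first and forecast the differences, then add them back to the last observed level.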

What Are the Limitations of Random Forests in Big Data Analysis?

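You should know that the main limitations of random forests in big data analysis are computational. Training numerous deep trees on vast datasets demands significant processing power and memory, the fitted model can itself become large, and prediction is slower than with a single tree or a linear model. Overfitting is generally less of a concern as the amount of data grows, but fully grown trees still add cost without always adding accuracy. While random forests are powerful, these limitations mean you might need to consider other algorithms or optimize your setup, for example by limiting tree depth, training each tree on a subsample of rows, and building trees in parallel.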
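As one possible starting point for larger datasets, the sketch below shows scikit-learn settings that bound the cost of each tree. The specific values are assumptions chosen for illustration, not tuned recommendations.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier

# A moderately sized synthetic dataset stands in for "big" data here.
X, y = make_classification(n_samples=20000, n_features=30, random_state=0)

forest = RandomForestClassifier(
    n_estimators=100,
    max_depth=12,         # cap tree depth to bound memory and model size
    min_samples_leaf=5,   # avoid tiny leaves that add nodes but little accuracy
    max_samples=0.2,      # each tree is trained on a 20% bootstrap sample
    n_jobs=-1,            # build trees in parallel on all available cores
    random_state=0,
)
forest.fit(X, y)

# Check that the depth cap was respected across the ensemble.
depths = [est.get_depth() for est in forest.estimators_]
print(f"trees: {len(depths)}, deepest tree: {max(depths)}")
```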

How Do You Interpret Feature Importance in Random Forests?

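When you interpret feature importance in random forests, you’re evaluating which variables influence your model the most. You can use methods like mean decrease in impurity or permutation importance to gauge each feature’s impact. Mean decrease in impurity is computed from the training data and tends to favor features with many distinct values, while permutation importance measures how much performance drops when a feature’s values are shuffled, ideally on held-out data. Both describe relative importance to the model rather than causal effects, and both can be misleading when features are strongly correlated, because importance may be split between them. Read with these caveats in mind, feature importance makes your model more transparent and helps you make informed decisions and refine your model effectively.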

Are Random Forests Suitable for Small Sample Sizes?

You might wonder if random forests are suitable for small sample sizes. They can still offer good predictive power, but feature importance rankings become unstable when there are few observations, so interpret them cautiously. There’s also a risk of overfitting if you leave the trees unconstrained. To address this, tune hyperparameters such as minimum leaf size carefully and estimate performance with cross-validation or the out-of-bag error rather than a single train/test split, so your assessment of the model remains reliable despite limited data.
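The following sketch shows one way to estimate performance on a small dataset using repeated cross-validation alongside the out-of-bag score. It assumes scikit-learn; the 80-row synthetic dataset and the hyperparameter values are illustrative assumptions only.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import RepeatedStratifiedKFold, cross_val_score

# A deliberately small synthetic dataset.
X, y = make_classification(
    n_samples=80, n_features=10, n_informative=3, random_state=0
)

forest = RandomForestClassifier(
    n_estimators=200,
    min_samples_leaf=3,   # larger leaves act as mild regularization
    oob_score=True,       # score each row using trees that did not train on it
    random_state=0,
)

# Repeating the folds reduces the noise of a single 5-fold split.
cv = RepeatedStratifiedKFold(n_splits=5, n_repeats=5, random_state=0)
scores = cross_val_score(forest, X, y, cv=cv)

forest.fit(X, y)
print(f"CV accuracy: {scores.mean():.3f} (std {scores.std():.3f})")
print(f"out-of-bag accuracy: {forest.oob_score_:.3f}")
```

A large standard deviation across folds is itself useful information: it tells you how much the estimate could move with a different sample.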

Conclusion

Now that you understand how random forests blend simplicity with power, you’re like a skilled painter combining bold strokes with subtle details. Just as a forest integrates diverse trees into a vibrant ecosystem, your statistical skills can grow into a rich, interconnected network of insights. Embrace this tool, and you’ll navigate complex data landscapes with confidence, seeing the forest and the trees in one clear, insightful view.