The Kolmogorov-Smirnov (KS) test helps you compare your data to a theoretical distribution or to another data set, checking how similar they are. You calculate the maximum difference between the empirical distribution of your data and the expected distribution, which shows how closely they match. A small difference means they are similar, while a large gap suggests they aren’t. If you’re curious about how this test works and when to use it, there’s more to discover.

Key Takeaways

- The Kolmogorov-Smirnov (KS) test compares the empirical distribution of a sample to a theoretical distribution, or the empirical distributions of two samples to each other.

- It measures the maximum difference (gap) between the empirical cumulative distribution function (EDF) and the theoretical CDF.

- A small p-value from the test indicates the data likely does not follow the hypothesized distribution.

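- The test is non-parametric: it does not assume a particular distributional family, although the standard version does assume continuous data and a reference distribution that is fully specified in advance.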

- It is most effective with large, continuous datasets and less suitable for small or discrete samples.

Have you ever wondered how to determine if a sample follows a specific distribution? The Kolmogorov-Smirnov (KS) test is a popular tool that helps you do just that. It’s designed to compare your sample data to a theoretical distribution or to compare two samples to see if they come from the same distribution. The core idea is to analyze the data distribution without making many assumptions, which makes it especially useful in real-world scenarios where the true underlying distribution is unknown.

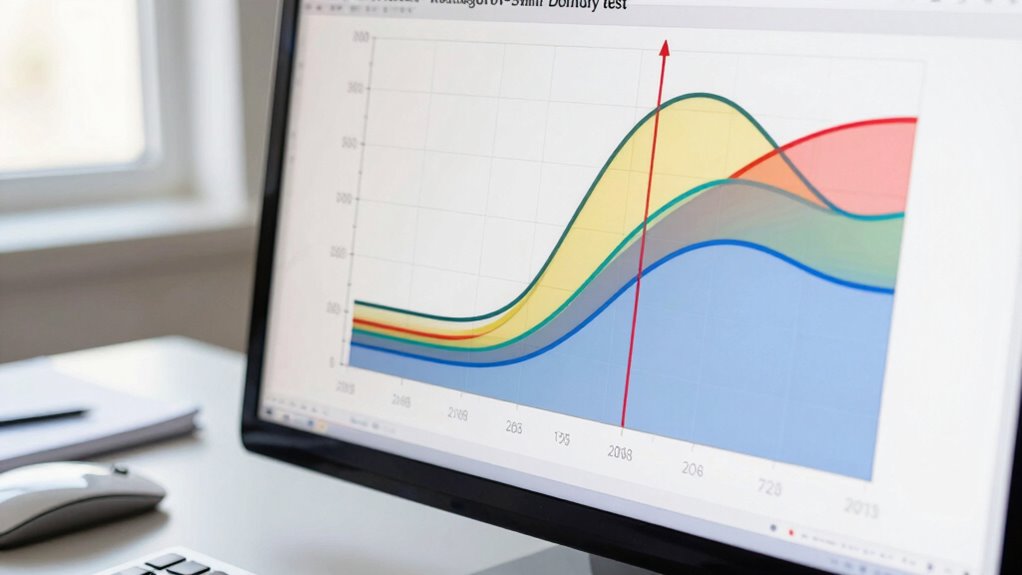

The KS test works by calculating the maximum vertical distance between the empirical distribution function (EDF) of your sample and the cumulative distribution function (CDF) of the reference distribution. The EDF is a step function: at any value x, it gives the fraction of your observations that are less than or equal to x, so it rises by 1/n at each of the n data points. The CDF, on the other hand, gives the probability that the theoretical distribution you’re testing against produces a value at or below x. The largest gap between these two functions is the test statistic, usually written D, and it quantifies how well your data conforms to the expected pattern. If D is small, your data aligns closely with the theoretical distribution; if it’s large, it indicates a discrepancy.
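The largest-gap calculation described above can be sketched in a few lines of plain Python. This is a minimal illustration rather than a reference implementation: the function names `normal_cdf` and `ks_statistic` are chosen here for the example, the reference distribution is assumed to be a standard normal, and the sample values are made up.

```python
import math


def normal_cdf(x, mu=0.0, sigma=1.0):
    """CDF of a normal distribution, written with the error function."""
    return 0.5 * (1.0 + math.erf((x - mu) / (sigma * math.sqrt(2.0))))


def ks_statistic(sample, cdf):
    """Largest vertical gap between the sample's EDF and a reference CDF."""
    xs = sorted(sample)
    n = len(xs)
    if n == 0:
        raise ValueError("sample must not be empty")
    d = 0.0
    for i, x in enumerate(xs):
        f = cdf(x)
        # The EDF jumps from i/n to (i+1)/n at x, so the gap has to be
        # checked just below and just above the step.
        d = max(d, (i + 1) / n - f, f - i / n)
    return d


# Hypothetical measurements, made up for illustration only.
sample = [-1.2, -0.4, 0.1, 0.3, 0.9, 1.5]
d_stat = ks_statistic(sample, normal_cdf)
print(f"D = {d_stat:.4f}")
```

Because the EDF is flat between data points and the CDF never decreases, the largest gap can only occur at a data point, which is why the loop checks both sides of each step instead of scanning a grid of x values.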

This comparison relies on the data itself rather than on parametric assumptions. The KS test is non-parametric, and for continuous data it is distribution-free: under the null hypothesis, the sampling distribution of the test statistic does not depend on which continuous distribution you are testing against. The reference distribution does need to be fully specified in advance, though; if you estimate its parameters from the same sample, the standard p-values become too large and the test too conservative. The test produces a p-value, which helps you decide whether to reject or fail to reject the null hypothesis that your data follows the specified distribution. A small p-value indicates a significant difference, meaning your data likely doesn’t follow that theoretical distribution, while a large p-value means only that the test found no evidence of a mismatch, not that the fit is proven.
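To show how a KS statistic turns into a p-value, here is a rough sketch based on Kolmogorov's limiting distribution, whose tail probability is the alternating series 2 Σ (-1)^(k-1) exp(-2 k² λ²) with λ = √n · D. The function name `ks_pvalue_asymptotic`, the 0.05 threshold, and the example values of D and n are assumptions made for illustration. The formula is a large-sample approximation, so treat its output as approximate for small n; statistical libraries typically use exact or corrected methods there.

```python
import math


def ks_pvalue_asymptotic(d, n, terms=100):
    """Approximate one-sample KS p-value from Kolmogorov's limiting distribution."""
    lam = math.sqrt(n) * d
    if lam < 0.2:
        # The tail probability is indistinguishable from 1 this close to zero,
        # and the series below converges poorly there.
        return 1.0
    total = 0.0
    for k in range(1, terms + 1):
        sign = 1.0 if k % 2 == 1 else -1.0
        total += sign * math.exp(-2.0 * k * k * lam * lam)
    # Clip to [0, 1] to guard against floating-point round-off.
    return min(1.0, max(0.0, 2.0 * total))


# Hypothetical inputs: a statistic of D = 0.20 from a sample of n = 50.
d_example, n_example = 0.20, 50
p_value = ks_pvalue_asymptotic(d_example, n_example)

alpha = 0.05  # conventional significance level, chosen here as an assumption
decision = "reject" if p_value < alpha else "fail to reject"
print(f"p = {p_value:.4f}: {decision} the null hypothesis")
```

Note that the decision is phrased as "reject" or "fail to reject": a p-value above the threshold does not confirm that the data follows the reference distribution.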

One of the reasons the KS test is so widely used is its simplicity and versatility. It applies to continuous data, and a two-sample version compares the EDFs of two samples to determine whether they plausibly come from the same distribution. Because it relies on a single number, the maximum difference between two distribution functions, it is straightforward to implement and interpret. When you want to validate assumptions about your data or check goodness of fit, the KS test provides a quick way to do so. Just keep in mind that it is most effective with large samples of continuous data: with discrete data, which produce ties, the standard p-values are no longer exact, and with small samples the test has little power.
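For the two-sample case, the same idea applies with a second EDF taking the place of the theoretical CDF. The sketch below computes only the statistic, not a p-value; the function name `ks_two_sample_statistic` and the sample values are made up for illustration.

```python
def ks_two_sample_statistic(a, b):
    """Largest gap between the empirical distribution functions of two samples."""
    xs, ys = sorted(a), sorted(b)
    n, m = len(xs), len(ys)
    if n == 0 or m == 0:
        raise ValueError("both samples must be non-empty")
    i = j = 0
    d = 0.0
    while i < n and j < m:
        v = min(xs[i], ys[j])
        # Step past every observation equal to v in both samples, so tied
        # values are counted in both EDFs before the gap is measured.
        while i < n and xs[i] == v:
            i += 1
        while j < m and ys[j] == v:
            j += 1
        d = max(d, abs(i / n - j / m))
    return d


# Hypothetical samples, made up for illustration only.
group_a = [2.1, 2.5, 3.0, 3.2, 4.8]
group_b = [2.9, 3.6, 4.1, 4.4, 5.0, 5.3]
print(f"D = {ks_two_sample_statistic(group_a, group_b):.4f}")
```

The loop can stop as soon as one sample is exhausted: that sample's EDF has reached 1, so the gap can only shrink as the other EDF catches up.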

In essence, the Kolmogorov-Smirnov test offers you a clear, concise way to assess data distribution conformity, giving you confidence in your analysis and helping you make informed decisions based on the empirical comparison between your sample and the theoretical model.

As an affiliate, we earn on qualifying purchases.

Frequently Asked Questions

How Does the KS Test Compare to Other Goodness-Of-Fit Tests?

The KS test compares your sample directly to a reference distribution through the EDF, so unlike the chi-square test it needs no binning of the data. It responds to differences in both location and shape, but it is most sensitive near the center of the distribution and less so in the tails. The Anderson-Darling test, which is also based on the EDF, gives more weight to the tails and often has more power to detect departures there. The KS test’s main advantages are that it is simple, works for any fully specified continuous distribution, and extends naturally to comparing two samples.

Can the KS Test Be Used for Small Sample Sizes?

The KS test can be used for small sample sizes, but you should be aware of its limitations. With limited data, the test’s power decreases, making it harder to detect true differences, so a non-significant result from a small sample says little about how well the distribution fits. If possible, collect a larger sample or consider a more powerful alternative, and interpret the results cautiously.

What Are Common Pitfalls When Applying the KS Test?

Applying the KS test without checking its assumptions or your sample size can lead to misleading results. Common pitfalls include using it with small samples, where power is low; estimating the reference distribution’s parameters from the same data, which makes the standard p-values too large; and applying it to discrete or heavily tied data, for which the test was not designed. Make sure your data meets the test’s requirements and that your sample is large enough before drawing conclusions.

Is the KS Test Suitable for Categorical Data?

The KS test isn’t suitable for categorical data. It is designed for continuous distributions and depends on ordering the observations to build a cumulative distribution function, which has no meaning for unordered categories. Instead, use a test such as the chi-square test or Fisher’s exact test, which are built for counts in categories.

How Do I Interpret the P-Value in the KS Test?

The p-value is the probability of seeing a gap at least as large as the one you observed if the null hypothesis were true. A small p-value (commonly below 0.05) indicates that your data differs markedly from the expected distribution and is grounds for rejecting the null hypothesis. A large p-value means the test found no significant discrepancy; it does not prove that your data follows the distribution. A p-value is also not a measure of how large the difference is, so report the test statistic alongside it.

Conclusion

Now that you understand the Kolmogorov-Smirnov test, you’re ready to check whether a sample matches a reference distribution, or whether two samples match each other. The test’s simplicity is its strength, but its results are only as trustworthy as its assumptions: continuous data, a reference distribution specified in advance, and a sample large enough to give the test power. Keep those in mind and interpret the p-value carefully.
